First Principles Thinking: Build Intelligence, Don’t Hoard Facts

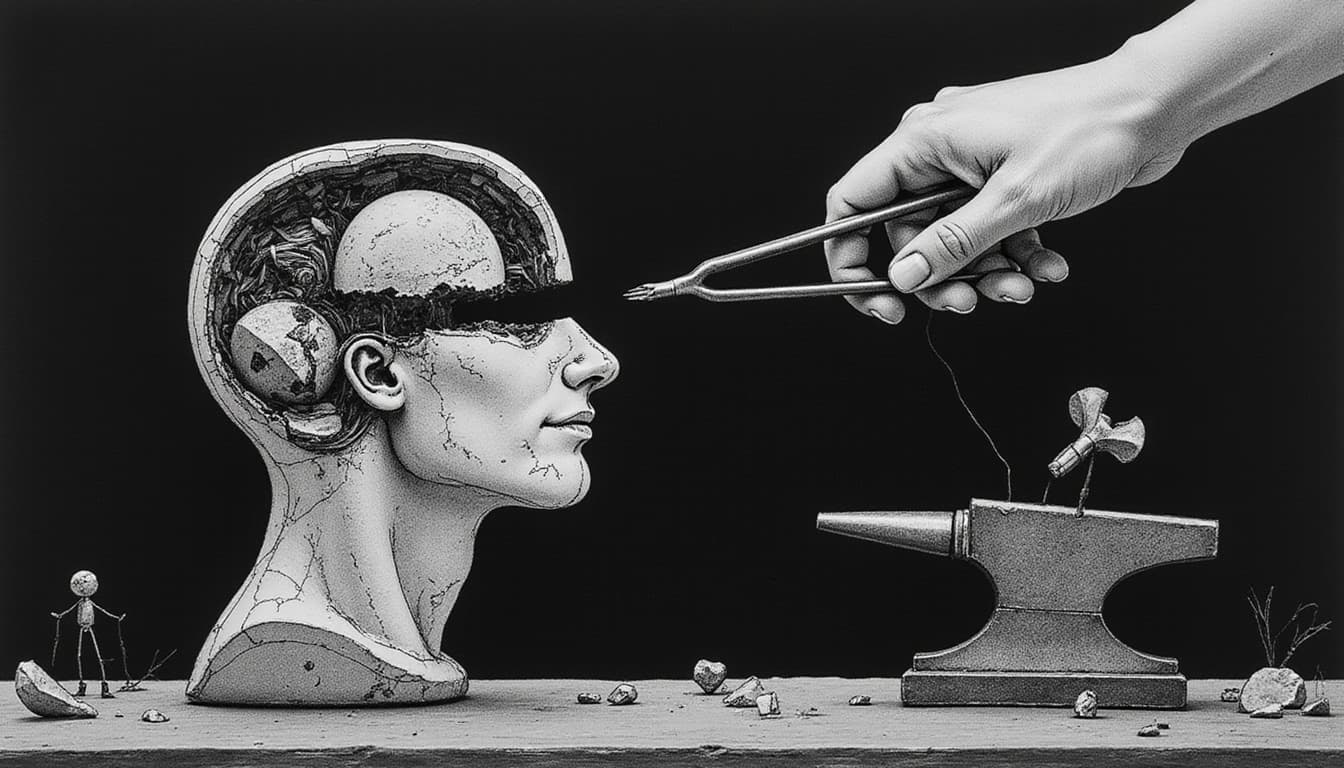

Intelligence isn’t how much you’ve stored; it’s how well you can rebuild. The smartest people I’ve met treat their thinking like a lab: strip ideas to essentials, make a clear claim, then try to break it.

Intelligence in practice is the habit of reducing problems to first principles, stating a clear, testable explanation, and updating that explanation whenever new evidence or contradictions appear. Richard Feynman emphasized building from fundamentals; Karl Popper emphasized falsification. Together they yield clarity that improves with each correction.

See the trap of “more facts”

A résumé of courses feels safe; a clean explanation feels exposed. Early in my career, I hoarded articles and notes, convinced that more input meant better output. It didn’t. When pressed to explain a decision, I reached for citations instead of causes.

The illusion is simple: more inputs look like progress. But knowledge without an integrating explanation is brittle. When reality pushes back (customers behave differently, a forecast misses), a pile of facts won’t tell you what broke. An explanation will, because it shows which assumption failed.

Intelligence isn’t a library; it’s a workshop. Facts are materials. Explanations are builds. Tests are where intelligence shows up.

Apply first principles thinking

Our starting stance is a physicist’s move: reduce the problem to what must be true, then reason upward. First, name the phenomenon plainly: “Churn spiked last month.” Then strip it to basics: “People cancel when perceived value drops below perceived cost.” Finally, identify the few variables that actually drive the effect: “Value signals include outcome speed, reliability, and support responsiveness.”

Feynman’s bias was toward explanations simple enough that confusion has nowhere to hide in the wording. If you can’t teach the essence in clear, everyday terms, you haven’t finished reducing.

Use falsification to revise

I used to search for confirming data. Popper turns that impulse on its head: make a claim that could be wrong, then look for the quickest way to disprove it. Three moves keep the loop honest.

First, state a crisp claim: “If onboarding time drops by half, seven-day retention will rise.” Next, define a specific failure condition: “If retention doesn’t move within two release cycles, onboarding wasn’t the binding constraint.” Finally, run the fastest, cheapest test: “Ship a guided checklist to first-time users and measure cohort behavior.”
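Writing the three moves down before the test runs keeps the failure condition from drifting afterward. Below is a minimal sketch in Python, assuming retention is tracked as a fraction per release cycle. `FalsifiableClaim`, `min_lift`, and `max_cycles` are hypothetical names, and the thresholds and numbers are illustrative, not real cohort data.

```python
from dataclasses import dataclass


@dataclass
class FalsifiableClaim:
    """A claim plus a failure condition fixed before the test runs (hypothetical structure)."""

    statement: str
    failure_condition: str
    min_lift: float  # smallest retention gain that counts as "moved" (assumed threshold)
    max_cycles: int  # release cycles allowed before we call the result (assumed)

    def is_refuted(self, baseline: float, observed_by_cycle: list[float]) -> bool:
        """True if retention never rose by at least min_lift within max_cycles."""
        window = observed_by_cycle[: self.max_cycles]
        if not window:
            raise ValueError("No observations yet; the test hasn't run.")
        return all(value - baseline < self.min_lift for value in window)


claim = FalsifiableClaim(
    statement="If onboarding time drops by half, seven-day retention will rise.",
    failure_condition="Retention doesn't move within two release cycles.",
    min_lift=0.02,
    max_cycles=2,
)

# Illustrative numbers only: baseline retention and two post-release cycles.
if claim.is_refuted(baseline=0.40, observed_by_cycle=[0.405, 0.41]):
    print("Refuted: onboarding wasn't the binding constraint.")
else:
    print("Survived this test.")
```

Fixing `min_lift` and `max_cycles` up front is the design choice that matters. The check can only return "refuted" or "survived", never "give it more time".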

Disproof isn’t defeat; it’s direction. It tells you where your explanation is thin and where to rebuild. Over time, the habit of asking “What would prove this wrong?” protects you from wishful thinking and sunk-cost drift.

See it in practice

The mechanics matter, so keep them concrete. A product team assumed their feature had failed because it lacked depth. Reducing to first principles, they reframed: value is roughly the speed of reaching a first win multiplied by reliability. They stated a claim: “Cut time-to-first-win from minutes to seconds, and Net Promoter responses will improve.” They shipped one small change, a preloaded template. When the scores didn’t move, they revised the explanation: the binding constraint was reliability, not speed.

Similarly, a sales manager believed “more calls” would fix the quarter. Reduced to first principles, pipeline health depends on fit, timing, and proof. They made a disprovable claim: “Adding one fit screen will increase meeting-to-opportunity conversion.” A two-week test showed no lift. The revision shifted effort toward stronger proof through customer stories, which did move conversion.

As a consultant, I once recommended a roadmap based on four case studies I admired. A client asked me to write the one-sentence claim my plan depended on. I wrote it, then we listed two ways it could fail. One did, immediately. We rebuilt the plan in a day rather than losing a quarter. That habit stuck.
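The product team’s reframing treats value as a product of two factors, and a toy calculation shows why that framing points at the binding constraint. This is a sketch assuming both factors are scored from 0 to 1, with `speed` standing in for how quickly a user reaches a first win. The function names and scores are hypothetical illustrations, not the team’s data.

```python
def value(speed: float, reliability: float) -> float:
    """Toy model: value = speed-to-first-win score x reliability score, both in [0, 1]."""
    return speed * reliability


def best_lever(speed: float, reliability: float, step: float = 0.1) -> str:
    """Compare the gain from improving each factor by the same step (capped at 1.0)."""
    current = value(speed, reliability)
    gain_speed = value(min(speed + step, 1.0), reliability) - current
    gain_reliability = value(speed, min(reliability + step, 1.0)) - current
    return "speed" if gain_speed > gain_reliability else "reliability"


# Hypothetical scores: users reach a first win quickly, but the product often lets them down.
print(best_lever(speed=0.8, reliability=0.3))  # → reliability
```

Because the factors multiply, the gain from improving one is proportional to the other. When reliability is low, making the product faster barely moves value, which matches the team’s revised explanation.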

Make it a habit

You don’t need new tools for this; you need a tighter thinking loop. Keep a claims ledger: one-sentence explanations you’re currently betting on, each with a clear failure condition. When you change your mind, note why. Run the “teach it plain” test: explain your current thinking to a peer without jargon. If they can’t restate it, reduce further.

Prefer the smallest decisive test: choose the next step that most threatens your favorite idea. If the idea survives, your confidence is earned, not declared. Separate materials from builds by tagging notes as “materials” and explanations as “builds.” Most people mix the two and mistake volume for rigor. Make correction emotionally cheap by treating being wrong as a receipt for learning. If you frame disproof as failure, you’ll avoid the very tests that would make you sharper.
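A claims ledger needs very little structure: the current claim, its failure condition, and a history of revisions with the reason for each. Below is a minimal sketch in Python. `LedgerEntry`, `ClaimsLedger`, and their methods are hypothetical, and the example revision is invented for illustration. A notebook or spreadsheet with the same columns works just as well.

```python
from dataclasses import dataclass, field


@dataclass
class LedgerEntry:
    claim: str  # one-sentence explanation you're currently betting on
    failure_condition: str  # the observation that would prove it wrong
    history: list[tuple[str, str]] = field(default_factory=list)  # (old claim, reason for change)


class ClaimsLedger:
    """Hypothetical ledger: every change of mind is recorded along with its reason."""

    def __init__(self) -> None:
        self.entries: dict[str, LedgerEntry] = {}

    def bet(self, key: str, claim: str, failure_condition: str) -> None:
        """Record a new claim you're betting on."""
        self.entries[key] = LedgerEntry(claim, failure_condition)

    def revise(self, key: str, new_claim: str, new_failure_condition: str, reason: str) -> None:
        """Replace a claim, keeping the old one and the reason it changed."""
        entry = self.entries[key]
        entry.history.append((entry.claim, reason))
        entry.claim = new_claim
        entry.failure_condition = new_failure_condition


ledger = ClaimsLedger()
ledger.bet(
    "churn",
    "Churn spiked because onboarding is too slow.",
    "Retention doesn't move within two release cycles of faster onboarding.",
)
# Hypothetical revision after the failure condition was met.
ledger.revise(
    "churn",
    "Churn spiked because the product is unreliable.",
    "Churn stays flat after reliability fixes ship.",
    reason="Faster onboarding shipped; retention didn't move.",
)
print(ledger.entries["churn"].history)
```

Keeping the old claim next to its reason is what makes the ledger useful later: it records why you changed your mind, not just what you believe now.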

Clarity compounds when you treat mistakes as information. The point isn’t to be right on the first pass; it’s to become less wrong with each pass.

Close the loop

The physics mindset travels well: question, reduce, test, revise. Feynman gives the humility to rebuild from bedrock. Popper gives the courage to look for the crack. Together they replace brittle certainty with robust clarity.

Intelligence is the craft of making explanations that survive contact with reality, and rebuilding them when they don’t.